Processing Files with Docker Volumes

Docker can be a great tool for processing workloads such as optimising images, compiling source code, or anything else with very specific requirements. In this post we'll go over how to automate this kind of workflow in containers.

In the past I've written about a more niche case, updating PHP apps with breaking version changes, where I used a similar method. You can also do it purely with Docker and mounted volumes.

Containers are great for reproducible runtimes, but they're also a great place to stick everything you really don't want to deal with in your local development setup. You only need to automate the task once, and then you can pass it on or dig it up when you need it, instead of carefully tracking which Python, Node or whatever version is installed on your system or, even worse, making sure it's installed on all of your systems.

Creating your Docker Image

For this example we'll resize and convert some pictures, because that requires dependencies we'll need in our image, and we'll need to mount a volume to supply the running container with input.

Let's say we want to convert some images from JPEG to WebP. We'd create a Dockerfile like this:

FROM alpine:3.16

RUN apk add --update \
    libwebp-tools \
    bash

# create "in container" directories for source and destination images
RUN mkdir -p /var/app/input
RUN mkdir -p /var/app/output

WORKDIR /var/app

RUN ls
RUN pwd
RUN ls /var/app/input

COPY run.sh .

CMD /var/app/run.sh

The script we're going to run is the following:

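#!/bin/bash

# convert every jpg in input/ to a resized webp in output/
for picture in input/*.jpg; do
  # skip the literal pattern if no jpg files were mounted
  [ -e "$picture" ] || continue
  echo "processing $picture"
  # quote the paths so file names with spaces survive
  cwebp -q 90 -resize 1600 0 "$picture" -o "output/$(basename "$picture" .jpg).webp"
done

# list the source and result files to compare sizes
ls -lash input/*
ls -lash output/*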
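To make the output naming in that loop a bit more explicit, here's a small sketch of just the path mapping. The function name webp_target is made up for illustration; it uses basename the same way the script does, and the quoting keeps file names with spaces in one piece.

```shell
#!/bin/bash

# Hypothetical helper: map an input path such as input/photo.jpg
# to the matching output path output/photo.webp.
webp_target() {
  local picture="$1"
  # basename strips the directory and the given .jpg suffix
  printf 'output/%s.webp\n' "$(basename "$picture" .jpg)"
}

# prints: output/street photo.webp
webp_target "input/street photo.jpg"
```

Note that this only handles the lowercase .jpg suffix, just like the glob in the loop.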

To build the image, we'll save the following as docker-build.sh:

#!/bin/bash

# create the host directories we'll mount later (-p: no error if they already exist)
mkdir -p input output

docker build -t image-optimise .
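One thing to keep in mind: docker build . sends the whole directory to the daemon as build context, so pictures sitting in input and output get sent along (most likely the reason the build output further down reports a 65.53MB context). A possible fix, sketched under the assumption that neither folder is needed at build time, is a .dockerignore file next to the Dockerfile. The function name write_dockerignore is just an illustrative choice.

```shell
#!/bin/bash

# Hypothetical helper: write a .dockerignore into the given project directory
# so the picture folders are left out of the build context.
# Assumption: the Dockerfile never copies anything from input/ or output/.
write_dockerignore() {
  local project_dir="$1"
  printf '%s\n' 'input' 'output' > "$project_dir/.dockerignore"
}

write_dockerignore "."
cat .dockerignore
```

The Dockerfile above only copies run.sh, so leaving both folders out shouldn't change the resulting image.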

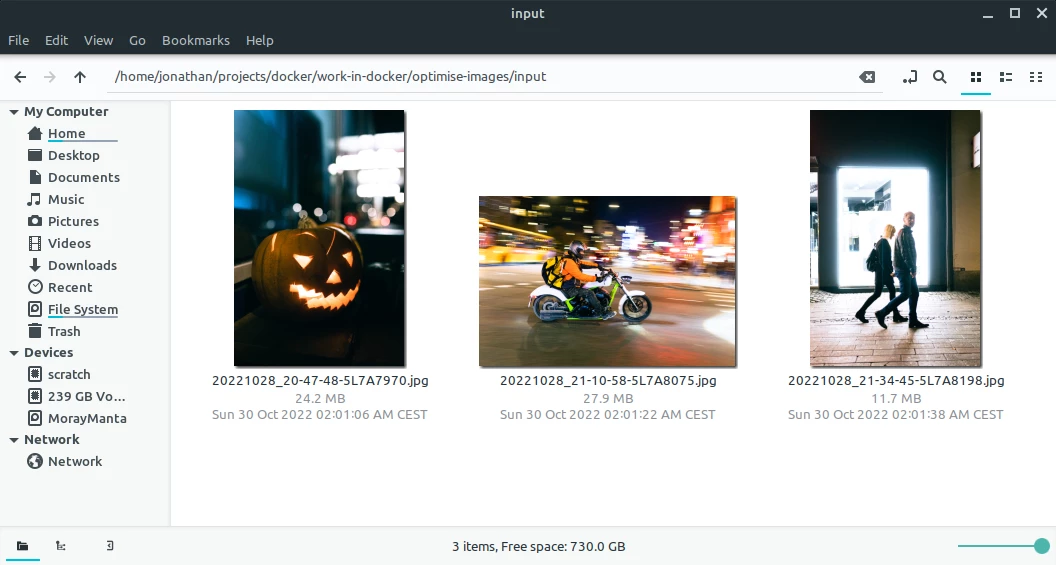

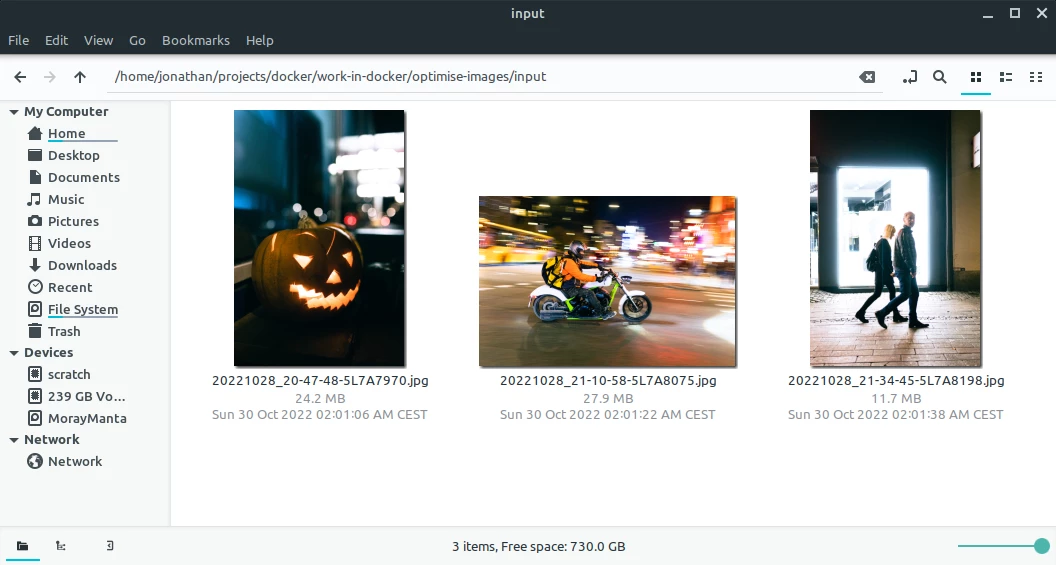

Now we just need some pictures to drop into the input directory, let's take some full size street photography from the other day:

These images are HUGE, between 12 and 24 MB each, so resizing them sounds like a good plan, otherwise I'll get angry comments again that I'm burning through people's cell phone plans.

Mounting Volumes into your Docker Container

In order to make the files on our host file system available inside the Docker container, we can run it with attached volumes. You might already know volumes from using docker-compose for your database server or similar. It's a bit like forwarding a port, but instead we make a little part of our file system available.

Volumes follow the host:container pattern, so we'll create a little bash script to run our container as well, docker-run.sh:

#!/bin/bash

docker run \
  -v "$PWD/input":/var/app/input \
  -v "$PWD/output":/var/app/output \
  image-optimise

Outside of the container the path is: /home/jonathan/projects/docker/optimise-images/input, inside the container the path to the "linked" folder is: /var/app/input.

We're going to mount two volumes, one for the input, one for the output and lastly we'll supply the name of the image to run.
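Since the container only needs to read from input, one option is to mount that volume read-only by appending :ro to the mapping. The sketch below only prints the command it would run, so it can be tried without starting a container. The function name print_run_command is an illustrative choice, not part of Docker.

```shell
#!/bin/bash

# Hypothetical helper: print the docker run command for a project directory.
# The input volume gets the :ro suffix so the container cannot modify the originals;
# the output volume stays writable.
print_run_command() {
  local project_dir="$1"
  printf 'docker run -v "%s/input":/var/app/input:ro -v "%s/output":/var/app/output image-optimise\n' \
    "$project_dir" "$project_dir"
}

print_run_command "$PWD"
```

If the printed command looks right, it can be pasted into docker-run.sh in place of the original one.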

Running your Docker Image

I tend to juggle a few different Docker containers, so I like to put my build and run commands in shell scripts. That way I don't need to remember the oddities and names of each one every time. To run both the build and the run script, we first need to make them executable with the +x flag:

chmod +x *.sh

and then we can run:

./docker-build.sh && ./docker-run.sh

and we'll see output of first building the image:

Sending build context to Docker daemon 65.53MB
Step 1/10 : FROM alpine:3.16
 ---> 9c6f07244728
Step 2/10 : RUN apk add --update libwebp-tools bash
 ---> Using cache
 ---> a2ea3667de16
Step 3/10 : RUN mkdir -p /var/app/input
 ---> Using cache
 ---> c2543f93fc67
[...]
 ---> Using cache
 ---> a19ceb836203
Step 10/10 : CMD /var/app/run.sh
 ---> Using cache
 ---> f6c34207c17e
Successfully built f6c34207c17e
Successfully tagged image-optimise:latest

and then the run, which will contain some output from cwebp actually resizing the images:

Output: 863108 bytes Y-U-V-All-PSNR 43.88 49.80 49.49 45.10 dB
(1.80 bpp)
block count: intra4: 13857 (91.77%)
             intra16: 1243 (8.23%)
             skipped: 1540 (10.20%)
bytes used: header: 512 (0.1%)
            mode-partition: 74321 (8.6%)
Residuals bytes |segment 1|segment 2|segment 3|segment 4| total
macroblocks:    |       5%|      18%|      32%|      45%| 15100
quantizer:      |      12 |      12 |       9 |       6 |
filter level:   |       4 |       3 |       2 |       3 |

23.1M Oct 30 00:01 input/20221028_20-47-48-5L7A7970.jpg
496.4K Nov 3 21:08 output/20221028_20-47-48-5L7A7970.webp

As we can see we reduced the image size from 23.1 MB to under 0.5 MB, which is awesome.
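To put a number on that, here's a rough calculation of the saving. It assumes the M and K suffixes printed by ls are 1024-based, so 23.1M becomes 23654.4K; the function name saved_percent is made up for this sketch.

```shell
#!/bin/bash

# Hypothetical helper: percentage saved when going from size "before" to size "after".
# Both sizes must use the same unit (here: KiB).
saved_percent() {
  awk -v before="$1" -v after="$2" \
    'BEGIN { printf "%.1f\n", (1 - after / before) * 100 }'
}

# 23.1M * 1024 = 23654.4K for the jpg, 496.4K for the webp
# prints: 97.9
saved_percent 23654.4 496.4
```

So the WebP file is roughly 2% of the size of the original JPEG.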

Image optimisation is just one example of what you can process in docker and spit back out to your local hard drive through mounted volumes.

I'm going to remember stuffing things in a container whenever some dependency is just TOO ANNOYING to be maintained in a healthy state on a local system.